The Fourth Pillar: Why CFOs Need to Rethink How They Account for AI

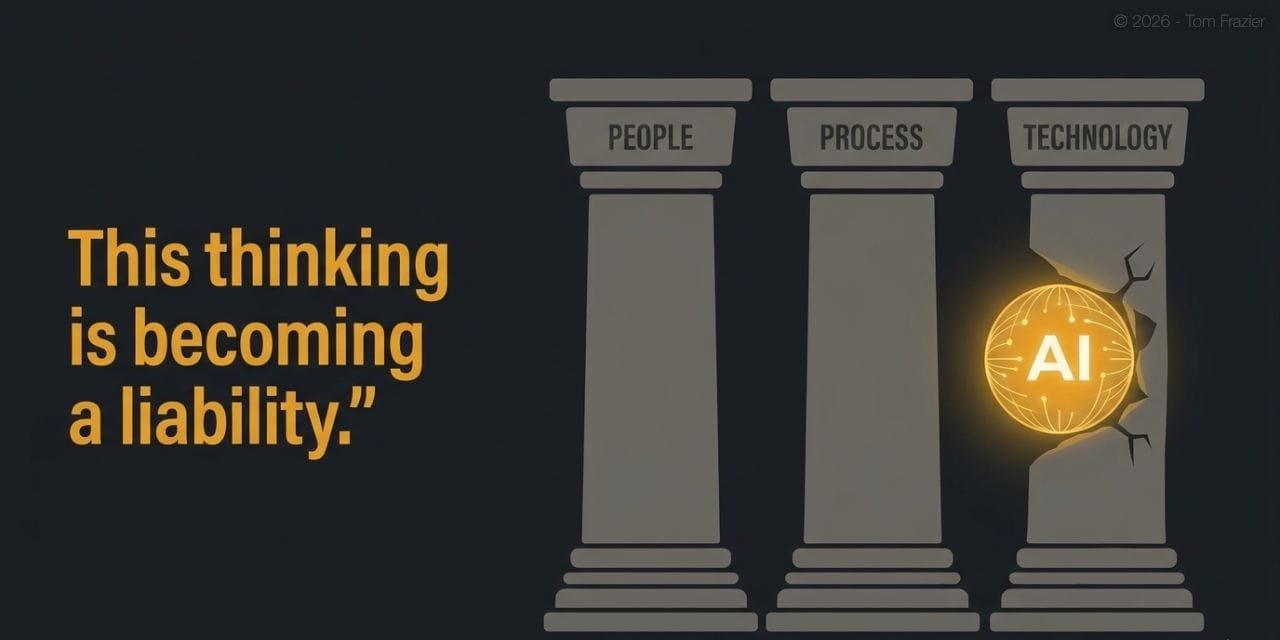

For decades, companies have measured technology investment against three pillars: people, process, and technology. AI breaks that model. Here's what needs to change — before the write-downs arrive.

For decades, businesses have been built and evaluated against people, process, and technology. When AI arrived, most organizations did what was convenient — they bucketed it under technology. That was understandable as a starting point.

That classification is becoming a liability.

The hidden cost problem in AI isn't primarily about overspending. It's about a fundamental unit-economics mismatch that most finance leaders haven't yet been forced to confront, because the bills haven't come due at scale. They will.

The Legibility Problem

Traditional cloud costs, for all their complexity, have one critical virtue: they're legible. You can measure your infrastructure bill against customers acquired, product usage, transaction volume, or seats. The numbers move together in roughly predictable ways. Cost models are uncomfortable, but they're traceable. When spend goes up, you can usually explain why in business terms.

AI built on frontier models breaks that legibility entirely.

The fundamental unit of consumption for a frontier model is the token. And tokens have no natural relationship to customers acquired, revenue generated, or value delivered. A CFO approving a frontier model deployment is signing a cost commitment denominated in a currency that doesn't appear anywhere else on the P&L — and has no obvious exchange rate into business outcomes.

You can't govern what you haven't properly named. And right now, most companies haven't named AI correctly.

This is why the write-down and stranded asset risk the industry is beginning to see isn't primarily an overspending problem. It's a structural misclassification problem. Companies are making four-dimensional cost commitments and trying to govern them with three-dimensional tools.

The Framework That's Failing

The people + process + technology framework has been the backbone of enterprise cost governance for a generation. It works because each pillar maps cleanly to a cost type, a governance model, and a set of levers for optimization:

People

Headcount, compensation, contractors. Scales with org growth. Optimized through hiring discipline and org design.

Process

Operations, workflows, compliance overhead. Scales with complexity. Optimized through automation and standardization.

Technology

Infrastructure, software, cloud. Scales with workload. Optimized through architecture decisions and contract discipline.

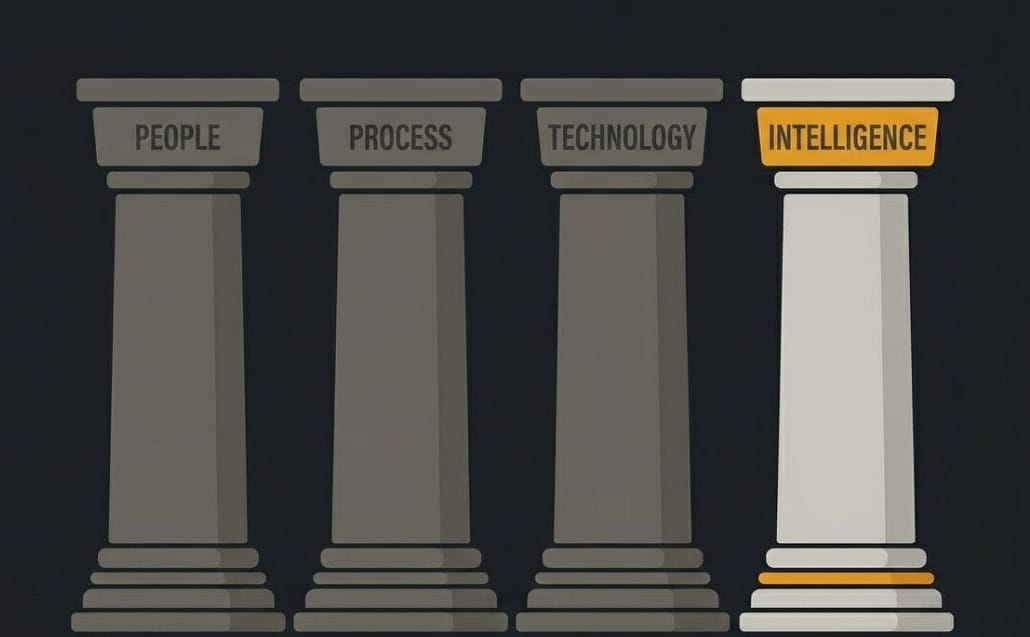

New: Intelligence

AI inference, model costs, autonomous agent compute. Scales with consumption — often disconnected from business outcomes. Requires its own governance model.

The problem is not that AI is expensive. The problem is that when AI costs are bucketed under "technology," they inherit a governance model that wasn't designed for them. Technology costs are largely predictable and tied to infrastructure decisions. AI inference costs — especially on frontier models — are consumption-driven, difficult to forecast, and structurally decoupled from the business metrics that justify the spend.

Two Paths to Resolution

There are two practical approaches to closing the unit economic gap, and they're not mutually exclusive.

Path One — Architectural

Deploy open-source models on your own cloud infrastructure instead of defaulting to frontier model APIs. You trade per-token pricing exposure for compute exposure. Compute scales the way cloud infrastructure always has: predictably, against workload, with optimization levers you actually control. This isn't the right answer for every use case, since frontier models have real capability advantages for certain tasks. But for high-volume, well-defined workloads, the unit-economics argument for open-source is often decisive.
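To make the trade concrete, here is a rough break-even sketch in Python. Every figure and name in it is a hypothetical placeholder (the API price, the GPU hourly rate, the throughput, the fixed overhead), and it assumes self-hosted capacity is fully utilized, which real deployments rarely achieve. Treat it as a way to frame the conversation, not as a pricing model.

```python
from typing import Optional


def api_monthly_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Cost of buying inference per token from a frontier model API."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def self_hosted_monthly_cost(
    tokens_per_month: float,
    gpu_hourly_rate: float,
    tokens_per_gpu_hour: float,
    fixed_monthly_overhead: float,
) -> float:
    """Cost of serving the same volume on your own compute.

    Assumes perfect utilization; fixed_monthly_overhead stands in for
    engineering, monitoring, and idle capacity (all hypothetical).
    """
    gpu_hours = tokens_per_month / tokens_per_gpu_hour
    return gpu_hours * gpu_hourly_rate + fixed_monthly_overhead


def break_even_tokens(
    price_per_million_tokens: float,
    gpu_hourly_rate: float,
    tokens_per_gpu_hour: float,
    fixed_monthly_overhead: float,
) -> Optional[float]:
    """Monthly token volume above which self-hosting is cheaper.

    Returns None when the API is cheaper per token at any volume.
    """
    api_cost_per_token = price_per_million_tokens / 1_000_000
    hosted_cost_per_token = gpu_hourly_rate / tokens_per_gpu_hour
    if api_cost_per_token <= hosted_cost_per_token:
        return None
    return fixed_monthly_overhead / (api_cost_per_token - hosted_cost_per_token)


# Illustrative inputs only; substitute your own contract and benchmark numbers.
volume = break_even_tokens(
    price_per_million_tokens=10.0,
    gpu_hourly_rate=4.0,
    tokens_per_gpu_hour=2_000_000,
    fixed_monthly_overhead=20_000.0,
)
print(f"Break-even volume: {volume:,.0f} tokens per month")
```

Below the break-even volume the fixed overhead dominates and the API is cheaper. Above it, the per-token gap compounds in favor of self-hosting. The capability question stays separate: this sketch says nothing about whether an open-source model is good enough for the task.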

Path Two — Structural

Redesign how you model cost and value. This is the harder path, but the more important one. Intelligence needs to be extracted from the technology bucket and treated as a standalone pillar with its own governance framework, its own unit economics, and its own ROI model. That means asking different questions during project approval: not just "what does this cost?" but "what is the token-to-outcome exchange rate at scale, and how does it change as usage grows?"

Can your finance team answer this question right now?

What does 'one unit of AI' cost relative to 'one unit of business value' delivered?

If the answer is "we'll have to look into that," the fourth pillar doesn't exist in your organization yet. It's being subsidized by the technology budget, which means the true cost is invisible — until it isn't.
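One way to make that question answerable is to track the token-to-outcome exchange rate explicitly and compare it across stages of adoption. The Python sketch below is illustrative: the `UsageSnapshot` structure, the choice of outcome, and all of the numbers are assumptions made up for the example, not measurements.

```python
from dataclasses import dataclass


@dataclass
class UsageSnapshot:
    """One period of AI usage for a single deployment (hypothetical shape)."""
    label: str
    outcomes: int                     # e.g. customer interactions resolved
    tokens_consumed: int
    price_per_million_tokens: float


def tokens_per_outcome(snapshot: UsageSnapshot) -> float:
    """The exchange rate: tokens spent per unit of business outcome."""
    if snapshot.outcomes == 0:
        raise ValueError("no outcomes recorded; the exchange rate is undefined")
    return snapshot.tokens_consumed / snapshot.outcomes


def cost_per_outcome(snapshot: UsageSnapshot) -> float:
    """Inference cost per unit of business outcome."""
    if snapshot.outcomes == 0:
        raise ValueError("no outcomes recorded; cost per outcome is undefined")
    total_cost = snapshot.tokens_consumed / 1_000_000 * snapshot.price_per_million_tokens
    return total_cost / snapshot.outcomes


# Made-up figures: a pilot, then the same deployment at scale with
# longer prompts and more agent steps per interaction.
pilot = UsageSnapshot("pilot", outcomes=1_000, tokens_consumed=5_000_000,
                      price_per_million_tokens=10.0)
scaled = UsageSnapshot("scaled", outcomes=100_000, tokens_consumed=1_200_000_000,
                       price_per_million_tokens=10.0)

for snap in (pilot, scaled):
    print(f"{snap.label}: {tokens_per_outcome(snap):,.0f} tokens per outcome, "
          f"${cost_per_outcome(snap):.2f} per outcome")
```

In this invented example, outcomes grow 100x while tokens grow 240x, so the cost per outcome more than doubles even though the token price never changes. That drift is what a technology-style budget review tends to miss.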

What Good Governance Looks Like

Treating intelligence as a fourth pillar isn't just an accounting change. It's an operating philosophy shift. Here's what it looks like in practice:

- Separate budget lines. AI inference costs should have their own budget line items, not roll into cloud or software spend. This forces visibility at the point of approval, not after the fact.

- Outcome-denominated metrics. Every significant AI deployment should have an explicit unit economic model that translates inference costs into business outcomes — cost per customer interaction, cost per qualified lead processed, cost per decision automated. If you can't build that model before launch, that's a signal, not a detail to figure out later.

- Commitment discipline. Frontier model contracts and cloud AI commitments should go through the same scrutiny as any major infrastructure commitment. The fact that they're priced in tokens rather than servers doesn't make them less of a multi-year financial commitment.

- Architecture accountability. The choice between frontier model APIs and self-hosted open-source models should be a finance-informed decision, not purely an engineering preference. The cost structure implications are material enough to warrant it.
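The first practice, separate budget lines, can be sketched as a simple roll-up rule. The category names and the mapping below are hypothetical; a real chart of accounts will look different. The point is only that inference and agent compute map to their own pillar instead of disappearing into cloud spend.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Hypothetical mapping from spend category to pillar.
PILLAR_BY_CATEGORY = {
    "salaries": "people",
    "contractors": "people",
    "compliance": "process",
    "cloud_compute": "technology",
    "saas_licenses": "technology",
    "model_inference": "intelligence",
    "agent_compute": "intelligence",
}


def roll_up_by_pillar(line_items: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Sum spend per pillar; unknown categories are surfaced, not hidden."""
    totals: Dict[str, float] = defaultdict(float)
    for category, amount in line_items:
        pillar = PILLAR_BY_CATEGORY.get(category, "unclassified")
        totals[pillar] += amount
    return dict(totals)


# Made-up monthly line items for illustration.
items = [
    ("cloud_compute", 100_000.0),
    ("saas_licenses", 25_000.0),
    ("model_inference", 40_000.0),
    ("agent_compute", 10_000.0),
]
for pillar, total in roll_up_by_pillar(items).items():
    print(f"{pillar}: ${total:,.0f}")
```

Anything that lands in "unclassified" is a prompt for a conversation at approval time, which is where the visibility is supposed to happen.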

The Window Is Closing

Most companies are currently in what will eventually be recognized as the easy phase of enterprise AI adoption — where costs are small enough that the misclassification doesn't hurt visibly, and where the organizational enthusiasm for AI investment makes rigorous unit economics feel like friction.

That phase ends when commitments scale. When it does, the companies that built proper governance frameworks early, treating intelligence as a first-class pillar with its own cost discipline, will have optimization levers and financial visibility that others won't. The companies that kept AI inside the technology bucket will be untangling the misclassification while managing the write-downs at the same time.

The framework update isn't complicated. People, process, technology, and intelligence. Four pillars, four governance models, four sets of unit economics. The hard part isn't the concept. It's being willing to make the change before the cost of not doing it becomes undeniable.

The write-downs and stranded assets come later. The root cause is committing to AI infrastructure before you've defined what business outcome you're actually buying.