The Binary Is Readable Now

What happens to IP protection in the age of AI? Software intellectual property law evolved partly around a practical assumption: Compiled code was difficult to reverse engineer. That is no longer true.

I spent more than a decade of my career in information security. When I was studying for my undergraduate degree, formal concentrations in computer security didn't even exist. I had to construct my own, pulling together cryptography, systems, and network courses into something coherent before anyone had thought to package it. Thanks for letting me do that, RIT! That DIY approach to the field probably explains why I still find this stuff genuinely fascinating. Security has changed almost beyond recognition since the early days of computing, and it keeps changing.

So when Mark Russinovich, CTO of Microsoft Azure, ran a small experiment recently, it caught my attention immediately.

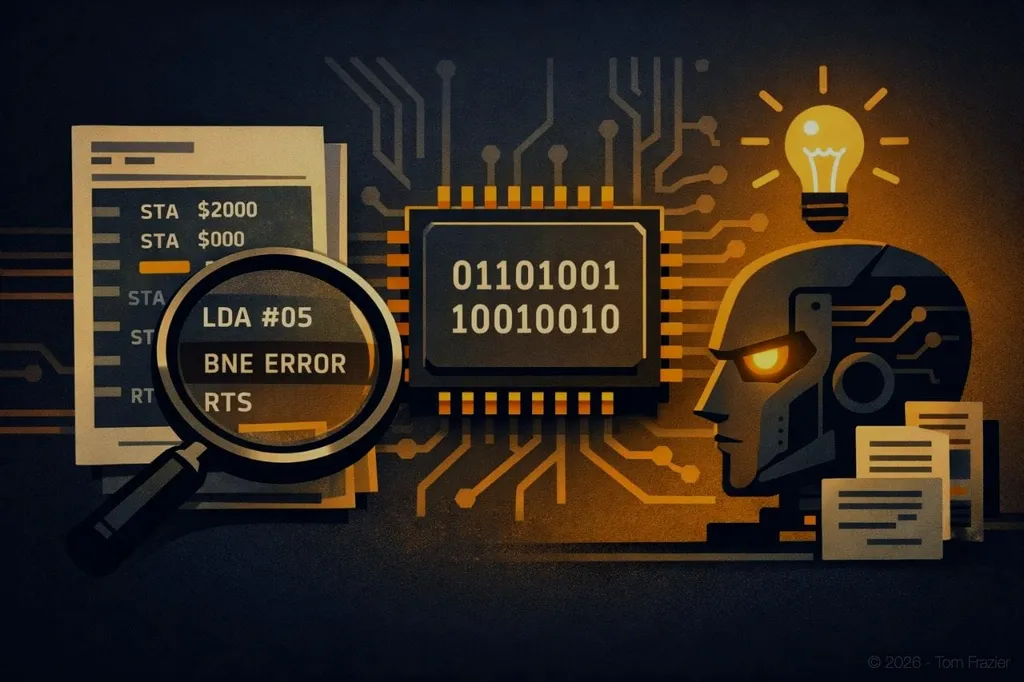

In May 1986, Russinovich wrote a utility called Enhancer for the Apple II, a tool written in 6502 machine language that extended Applesoft BASIC's GOTO, GOSUB, and RESTORE commands to accept variables and expressions, not just line numbers. Recently, he fed the raw binary from that forty-year-old program to Claude Opus 4.6: no source code, no context, just machine instructions printed in a magazine decades ago.

The model decompiled the machine language and found several security issues, including a case of "silent incorrect behavior" where, if the destination line was not found, the program would set the pointer to the following line or past the end of the program instead of reporting an error.

The fix: check the carry flag, which is set when a line is not found, and branch to an error.
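To make the bug pattern concrete, here is a minimal Python sketch of it, not the original 6502 code. It assumes a program represented as a sorted list of line numbers and models the processor's status flag as a plain boolean. The names `find_line`, `jump_unchecked`, `jump_checked`, and `UndefinedLineError` are hypothetical, invented for illustration, and do not come from Enhancer or Applesoft.

```python
from bisect import bisect_left


class UndefinedLineError(Exception):
    """Raised when a jump targets a line number that does not exist."""


def find_line(line_numbers, target):
    """Search a sorted list of line numbers for target.

    Returns (found, index). On a miss, index points at the next higher
    line, or one past the end of the program.
    """
    index = bisect_left(line_numbers, target)
    found = index < len(line_numbers) and line_numbers[index] == target
    return found, index


def jump_unchecked(line_numbers, target):
    """Buggy pattern: ignore the status flag and trust the pointer."""
    _found, index = find_line(line_numbers, target)
    return index


def jump_checked(line_numbers, target):
    """Fixed pattern: test the status flag and fail loudly on a miss."""
    found, index = find_line(line_numbers, target)
    if not found:
        raise UndefinedLineError(f"undefined line {target}")
    return index


program = [10, 20, 30]
print(jump_unchecked(program, 25))  # 2: silently lands on line 30
print(jump_unchecked(program, 99))  # 3: one past the end of the program
try:
    jump_checked(program, 25)
except UndefinedLineError as exc:
    print(exc)  # undefined line 25
```

The search routine is identical in both versions. The only difference is whether the caller looks at the status it returns, which is why this class of bug is easy to miss in review and cheap to fix once found.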

The capability itself isn't new. Reverse engineering tools have existed for decades. What's new is that this took minutes, required no specialist, and was essentially free.

As Russinovich put it:

"We are entering an era of automated, AI-accelerated vulnerability discovery that will be leveraged by both defenders and attackers."

The Attack Surface Just Expanded

The limiting factor in firmware security has always been analyst time. Auditing a binary is slow, expensive, and requires deep expertise. That constraint is eroding fast.

Anthropic's Frontier Red Team has been stress-testing Claude's security capabilities systematically: entering it in competitive Capture-the-Flag events, partnering with Pacific Northwest National Laboratory to experiment with using AI to defend critical infrastructure, and refining Claude's ability to find and patch real vulnerabilities in code.

The results across the field are striking. AI security startup AISLE discovered all 12 zero-day vulnerabilities announced in OpenSSL's January 2026 security patch, including a rare high-severity stack buffer overflow that is potentially remotely exploitable. OpenSSL is among the most scrutinized cryptographic libraries on the planet, with fuzzers running against it for years. The AI found what those fuzzers were not designed to find.

In February 2026, Anthropic productized this capability. Claude Code Security, now available in limited research preview for Enterprise and Team customers, scans codebases for security vulnerabilities and suggests targeted patches for human review — catching complex, context-dependent issues that rule-based static analysis tools typically miss, such as flaws in business logic or broken access control. Using Claude Opus 4.6, the team found over 500 vulnerabilities in production open-source codebases — bugs that had gone undetected for decades, despite years of expert review.

The market reacted immediately. On release day, JFrog dropped nearly 25%. On February 23, 2026 — the first full market day following the release — CrowdStrike, Datadog, and Zscaler fell around 11%; Fortinet and Okta fell roughly 6%; and SentinelOne and Palo Alto Networks fell approximately 5% and 3%, respectively. The Global X Cybersecurity ETF reached its lowest level since November 2023.

Whether the selloff was rational is a separate question.

Wedbush analysts argued that the reaction was driven by "AI Ghost Trade" fears and would prove to be the wrong call for clear winners like Palo Alto, CrowdStrike, and Zscaler. Analysts noted that Claude Code Security does not perform active analysis or conduct live countermeasures, meaning that platforms relying on behavioral analytics, threat intelligence, or real-time monitoring remain strategically central — including endpoint detection and response, network security, and security operations centers.

But the market's fear reflects something real even if its magnitude was off. Investors understood that a capability which automates a meaningful slice of what security engineers do — at near-zero marginal cost — changes the pricing power equation. As one analyst letter put it bluntly:

"When AI reproduces employees' work, pricing power shifts to the buyer."

What Happens to IP Protection Through Obscurity

Software intellectual property law evolved partly around a practical assumption:

Compiled code was difficult to reverse engineer. Shipping binaries instead of source offered real protection.

The effort required to reconstruct implementation details was high enough to serve as a meaningful barrier. That barrier has been collapsing for years. AI is accelerating the collapse.

Courts are already grappling with the consequences. In March 2025, the U.S. Court of Appeals for the D.C. Circuit affirmed that human authorship is a bedrock requirement for copyright registration, and that an AI system cannot be deemed the author of a work.

The U.S. Copyright Office's January 2025 report had already taken the same position: fully AI-generated outputs lack human authorship and are therefore not copyrightable, while works where a human retains sufficient creative control may qualify, as determined on a case-by-case basis.

The more consequential litigation concerns training data, derivative works, and what obligations arise when AI systems surface implementation details from proprietary code. These cases are moving faster than legislation. Judicial decisions are currently providing more practical guidance on AI and intellectual property than any statute.

The Open Source Argument

Open source software has always carried an implicit theory of competition: the code itself is less valuable than execution. Shipping source doesn't mean giving away your advantage — it means acknowledging that your advantage doesn't live in the code alone. It lives in the team, the infrastructure, the data, the integrations, the speed of iteration.

When AI can approximate the reverse engineering that once required months of specialist work, this argument gets stronger.

Protecting implementation details through binary distribution becomes more expensive and less reliable as a strategy. The honest question companies should be asking is not "how do we prevent our implementation from being understood?" but "what actually creates durable competitive advantage?"

In most cases, the answer is not the code. It's the data. The deployment infrastructure. The customer relationships. The ability to ship improvements faster than a competitor can analyze what you've already built. These are things that don't become more legible when AI gets better at reading binaries.

The Asymmetry Problem

None of this is symmetric. AI-assisted analysis helps defenders discover vulnerabilities before they're weaponized — but it also lets attackers scale vulnerability discovery in ways that weren't previously possible. The same model improvements behind Claude Code Security are available to anyone with API access. The window between discovery and adoption of patches is where attackers operate.

Anthropic's communications lead told VentureBeat the company built Claude Code Security to make defensive capabilities more widely available, "tipping the scales towards defenders" — while being equally direct about the tension:

"The same reasoning that helps Claude find and fix a vulnerability could help an attacker exploit it."

Gartner forecasts that by 2026, more than 80% of enterprises will have used generative AI APIs or deployed AI-enabled applications in production environments, up from less than 5% in 2023. The deployment is happening faster than governance.

Regulators are catching up. Italy's data protection authority issued a €15 million fine against OpenAI — the first generative AI-related case brought under GDPR — finding that the company trained ChatGPT using users' personal data without a proper legal basis.

The FTC's Operation AI Comply has continued into 2025 under the new administration, with enforcement actions against companies making deceptive claims about AI-powered products.

The warning period is over.

What This Means for How You Build

If your competitive moat depends on keeping your implementation secret, you're making a bet that AI won't keep getting better at reading binaries.

That's a bad bet.

As CSIS analysts put it, Claude Code Security does not signal the collapse of any industry — but it does mark a structural shift. As AI-driven code analysis accelerates vulnerability discovery, competitive advantage will flow to organizations that prioritize new security tasks and adapt to an increased tempo of cyber competition.

The companies that will do well in this environment are building systems where advantage compounds through execution rather than secrecy — fast iteration cycles, proprietary data flywheels, infrastructure that improves with use. By the time a competitor understands what you built, you've already shipped the next version.

This isn't a new lesson. It's the same lesson every technology transition teaches, applied to a new constraint.

The binary is readable now. The question is whether you're building as if that's true.