Proof of 'Proof of': Agentic AI's Next Frontier

Agentic AI can do the work. The bottleneck now is proof — of identity, authority, and action. Regulators are already drawing lines. The infrastructure to solve it exists today.

The agent demos are impressive. The enterprise deployments are real. The commerce use cases are coming fast. But somewhere between "watch this agent complete a task autonomously" and "allow this agent to act on your behalf across systems, spend your money, and make decisions that affect real people," there is a hard stop.

The gap isn't capability; the agents can do the work. The hard stop is proof.

- Can this agent prove it is what it says it is?

- Can it prove it has the authority to act?

- Can it prove it has the funds to transact?

- Can it prove — verifiably, to a regulator, an auditor, or a counterparty — that everything it did happened in the right order, with the right permissions, for the right reasons?

Right now, for most agentic deployments, the answer to all four questions is no. That gap is the next bottleneck, and the window to solve it before regulators force the issue is closing fast.

The Credentialing Problem No One Fixed

Traditional software systems deal in API keys, OAuth tokens, and service accounts. These mechanisms were built for static, human-initiated workflows. An employee logs in. A service authenticates. A token gets issued. The scope is fixed at issuance time.

Agents break all of that.

An autonomous agent doesn't just authenticate once. It acts repeatedly, dynamically, across organizational boundaries, often as part of multi-agent chains where Agent A dispatches Agent B, which instructs Agent C, none of which have a human in the loop approving each step.

The identity gap is structural. When Agent A receives a request from Agent B, there is no standardized mechanism for B to prove its identity, capabilities, or authorization chain. API keys conflate authentication with authorization and provide zero provenance information. That's adequate when a human is watching. It's a liability when no one is.

The same problem scales into payments. If an agent is authorized to spend $50 to complete a task, can it prove to the counterparty that the authorization is real, current, and cryptographically bound to a known principal? Not with existing infrastructure. Which means every agentic commerce scenario — every AI-negotiated contract, every automated procurement decision, every agent-to-agent service exchange — runs on trust that can't actually be verified.

The Regulatory Forcing Function

This would be a solvable-when-convenient problem if regulators weren't already drawing lines. They are, and the timelines are concrete.

The EU AI Act, Regulation (EU) 2024/1689, requires high-risk AI systems to implement continuous risk management (Article 9), maintain technical documentation (Article 11), keep automatic logs of system operation (Article 12), ensure human oversight capability (Article 14), and complete a conformity assessment before deployment (Article 43), which for some categories must involve an independent notified body rather than self-assessment. Penalties for non-compliance scale up to €35 million or 7% of global annual turnover for the most serious violations.

Most of these obligations are scheduled to apply from August 2, 2026.

If an organization can't trace an agent's actions and doesn't have proper control over its authority, it can't prove to regulators that the system is operating safely or lawfully. That's not a theoretical risk. It's the enforcement posture the EU AI Act establishes. Deployers of high-risk AI systems must keep logs generated by the AI for at least six months. For agentic systems running complex multi-step workflows, that requirement is meaningless without cryptographic integrity — logs that can be modified are not logs that satisfy Article 12.

Singapore moved first on agentic AI specifically. On January 22, 2026, Singapore's Infocomm Media Development Authority launched the Model AI Governance Framework for Agentic AI — the world's first governance framework specifically designed for AI agents capable of autonomous planning, reasoning, and action. The framework is voluntary but consequential: it provides guidance for all organizations deploying agentic AI in Singapore, focusing on four core dimensions — assessing and bounding risks upfront, making humans meaningfully accountable, implementing technical controls and processes, and enabling end-user responsibility.

For agentic systems, the framework explicitly calls for identity management to track individual agent behavior and establish accountability chains.

Singapore's framework is a living document. The binding legal obligations will follow.

In the US, Colorado went first among states. The Colorado Artificial Intelligence Act, originally scheduled for February 2026 and delayed to June 30, 2026, targets algorithmic discrimination in AI systems that make or substantially influence consequential decisions across employment, housing, credit, healthcare, education, insurance, and legal services. The Act explicitly cites NIST AI RMF and ISO/IEC 42001 as recognized models for responsible AI governance — organizations demonstrating alignment with these frameworks can qualify for safe harbor protections.

More states are in motion. More federal action is coming. The trajectory is uniform: prove the system, prove the process, or face the consequences.

NIST AI RMF and ISO/IEC 42001 are now the operational baseline across multiple jurisdictions. Together they require continuous risk management, documented oversight, post-deployment monitoring, and evidence that a human can intervene. For agentic deployments, that last requirement is the hard one — and it depends entirely on the infrastructure underneath.

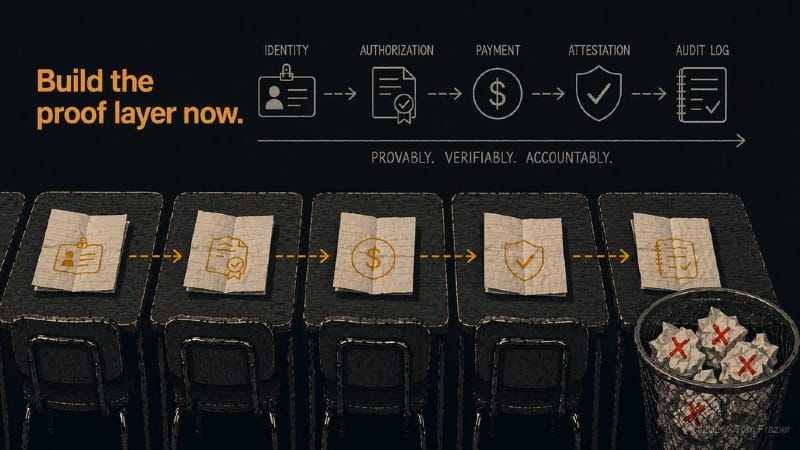

Three Proofs, One Infrastructure Problem

The proof requirements for autonomous agents break into three categories, each with its own technical challenges and none of them solved by the current stack.

Proof of identity.

An agent needs to prove, cryptographically, that it is what it claims to be, including what it's authorized to do, which system it belongs to, what its capabilities are, and who is ultimately accountable for its actions. Decentralized Identifiers (DIDs) are the right architecture here. A DID is a W3C-standard URI that resolves to a DID document listing the subject's public keys; some DID methods, such as did:key, encode the public key directly in the identifier and require no registry at all. Paired with Verifiable Credentials (W3C VC standard), an agent can carry signed, machine-verifiable claims about its identity and authority, which any counterparty can verify independently without calling back to the issuer.
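The did:key method is the simplest case of an identifier that carries its own public key. The sketch below shows how such an identifier could be built and parsed using only the standard library: the multicodec prefix for Ed25519 (0xed 0x01) is prepended to the 32-byte public key and the result is base58btc-encoded behind a "z" multibase prefix. The function names are hypothetical, and the key used in the usage note is a placeholder byte string rather than a real key.

```python
# Base58 alphabet (Bitcoin variant), selected by the multibase "z" prefix.
B58_ALPHABET = "123456789ABCDEFGHJKLMNPQRSTUVWXYZabcdefghijkmnopqrstuvwxyz"
ED25519_MULTICODEC = bytes([0xED, 0x01])  # multicodec tag for an Ed25519 public key
DID_KEY_PREFIX = "did:key:z"


def b58encode(data: bytes) -> str:
    """Encode bytes as base58; leading zero bytes become leading '1' characters."""
    n = int.from_bytes(data, "big")
    out = ""
    while n > 0:
        n, rem = divmod(n, 58)
        out = B58_ALPHABET[rem] + out
    pad = len(data) - len(data.lstrip(b"\x00"))
    return "1" * pad + out


def b58decode(text: str) -> bytes:
    """Inverse of b58encode."""
    n = 0
    for ch in text:
        n = n * 58 + B58_ALPHABET.index(ch)
    pad = len(text) - len(text.lstrip("1"))
    return b"\x00" * pad + n.to_bytes((n.bit_length() + 7) // 8, "big")


def did_key_from_public_key(public_key: bytes) -> str:
    """Build a did:key identifier from a raw 32-byte Ed25519 public key."""
    if len(public_key) != 32:
        raise ValueError("Ed25519 public keys are 32 bytes")
    return DID_KEY_PREFIX + b58encode(ED25519_MULTICODEC + public_key)


def public_key_from_did_key(did: str) -> bytes:
    """Recover the raw public key from a did:key identifier, with no registry lookup."""
    if not did.startswith(DID_KEY_PREFIX):
        raise ValueError("not a base58btc did:key identifier")
    raw = b58decode(did[len(DID_KEY_PREFIX):])
    if raw[:2] != ED25519_MULTICODEC or len(raw) != 34:
        raise ValueError("not an Ed25519 did:key")
    return raw[2:]
```

For example, `did_key_from_public_key(bytes(range(32)))` yields an identifier from which `public_key_from_did_key` recovers the same 32 bytes. A verifier needs nothing but the identifier itself to obtain the key it should check signatures against.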

The cryptographic primitive that makes this work is Ed25519, which produces 64-byte deterministic signatures with sub-millisecond performance and is widely used across the DID ecosystem.

An agent's identity token signed with Ed25519 over a JSON object gives you tamper-evident, machine-verifiable proof of identity at the speed agentic workloads require.
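Signing "over a JSON object" only works if signer and verifier agree on the exact bytes, because the same object can be serialized in many ways. The sketch below covers just that preparatory step, under the assumption of a simplified canonical form (sorted keys, no insignificant whitespace, UTF-8); it is not a full implementation of RFC 8785 (JSON Canonicalization Scheme). The claim names in the token are hypothetical, and the Ed25519 signature itself would come from a vetted cryptography library and is not shown.

```python
import hashlib
import json


def canonical_bytes(claims: dict) -> bytes:
    """Serialize claims deterministically so signer and verifier see the same bytes."""
    text = json.dumps(claims, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return text.encode("utf-8")


# Two dicts with the same content but different key order (hypothetical claims).
token_a = {"agent": "did:example:agent-b", "scope": ["invoice:read"], "exp": 1767225600}
token_b = {"exp": 1767225600, "scope": ["invoice:read"], "agent": "did:example:agent-b"}

# Both produce identical bytes, so a signature over one verifies against the other.
message = canonical_bytes(token_a)
fingerprint = hashlib.sha256(message).hexdigest()  # handy for logs; not a signature
```

The bytes in `message` are what an Ed25519 implementation would sign. Changing any claim value changes those bytes, which is what makes the resulting signature tamper-evident.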

UCAN (User Controlled Authorization Networks) extends this further. UCANs are capability-based delegation tokens that create verifiable chains of authorization — Agent A can delegate a specific, attenuated subset of capabilities to Agent B, which can further delegate to Agent C, and any party in the chain can verify the full provenance of the authorization without a central authority issuing or validating it. For multi-agent architectures, this is the correct approach. It replaces "trust the platform" with "verify the chain."
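The attenuation rule is the core of "verify the chain," and it can be sketched without any UCAN library. The example below is a minimal illustration, assuming each delegation has already had its signature checked (that step is deliberately omitted) and that capabilities are plain strings. The `Delegation` type, its field names, and the capability strings are hypothetical and do not follow the UCAN token format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Delegation:
    issuer: str              # who grants the capabilities
    audience: str            # who receives them
    capabilities: frozenset  # e.g. {"invoices/read"}
    expires_at: int          # Unix timestamp


def verify_chain(chain: list, root_issuer: str, now: int) -> bool:
    """Check linkage, expiry, and attenuation; signature checks are out of scope here."""
    if not chain or chain[0].issuer != root_issuer:
        return False
    for i, link in enumerate(chain):
        if link.expires_at <= now:
            return False
        if i > 0:
            parent = chain[i - 1]
            # Each link must be issued by the previous link's audience.
            if link.issuer != parent.audience:
                return False
            # Attenuation: a delegate can pass on a subset, never more.
            if not link.capabilities <= parent.capabilities:
                return False
    return True


owner_to_a = Delegation("did:example:owner", "did:example:agent-a",
                        frozenset({"invoices/read", "invoices/pay"}), 2000)
a_to_b = Delegation("did:example:agent-a", "did:example:agent-b",
                    frozenset({"invoices/read"}), 1500)
escalated = Delegation("did:example:agent-a", "did:example:agent-b",
                       frozenset({"invoices/read", "ledger/write"}), 1500)
```

Here `verify_chain([owner_to_a, a_to_b], "did:example:owner", now=1000)` succeeds, while swapping in `escalated` fails because Agent A tries to hand out a capability it was never given.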

Proof of funds.

Once an agent has identity, the next problem is payment. Every agentic commerce scenario — an agent purchasing API access, negotiating a service, executing a supply chain transaction — requires the agent to prove it has the funds and the authorization to transact, and to do so instantly without human approval in the loop.

This is what x402 solves.

x402 is an HTTP-native payment protocol that lets autonomous agents and APIs execute micropayments per request, without human intervention or account setup. Payment becomes a protocol primitive embedded directly in the HTTP lifecycle. The dormant HTTP 402 "Payment Required" status code, reserved since 1997 but never given a practical implementation, now has one. In September 2025, Coinbase and Cloudflare launched the x402 Foundation. With x402, any API or web service can require payment before serving content, and agents can autonomously pay for data, computation, and services from other agents.
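The request, 402, pay, retry loop is easier to see in code. The sketch below simulates it with plain functions rather than a real HTTP server or the x402 SDK. The header name `X-PAYMENT`, the field names, and the `verify_payment` helper are assumptions chosen for illustration, loosely modeled on published x402 examples; the actual schema and the on-chain settlement step should be taken from the protocol specification.

```python
import base64
import json

# Illustrative payment requirements; field names are assumptions, not the normative schema.
REQUIREMENTS = {
    "scheme": "exact",
    "network": "base",
    "asset": "USDC",
    "maxAmountRequired": "1000",  # atomic units; 0.001 USDC at 6 decimals
    "payTo": "0xMERCHANT_PLACEHOLDER",
    "resource": "/forecast",
}


def verify_payment(payload) -> bool:
    """Hypothetical stand-in for the signature and settlement checks a real server performs."""
    if not isinstance(payload, dict):
        return False
    try:
        amount = int(payload.get("amount", "0"))
    except (TypeError, ValueError):
        return False
    return (payload.get("payTo") == REQUIREMENTS["payTo"]
            and amount >= int(REQUIREMENTS["maxAmountRequired"]))


def handle_request(headers: dict):
    """Server side: answer 402 with requirements until a valid payment header arrives."""
    encoded = headers.get("X-PAYMENT")
    if encoded is None:
        return 402, {"accepts": [REQUIREMENTS], "error": "payment required"}
    try:
        payload = json.loads(base64.b64decode(encoded))
    except ValueError:
        return 402, {"accepts": [REQUIREMENTS], "error": "malformed payment"}
    if not verify_payment(payload):
        return 402, {"accepts": [REQUIREMENTS], "error": "payment rejected"}
    return 200, {"forecast": "sunny"}


def build_payment_header(requirements: dict, amount: str) -> str:
    """Client side: encode the payment payload an agent attaches when it retries."""
    payload = {"payTo": requirements["payTo"], "amount": amount}
    return base64.b64encode(json.dumps(payload).encode("utf-8")).decode("ascii")
```

An agent's first call, `handle_request({})`, returns 402 together with the requirements. The agent then builds a header from those requirements and retries, at which point the server returns 200. No account setup or human approval appears anywhere in the loop.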

The economics work because of stablecoins and L2 infrastructure. Traditional payment processors charge around $0.30 plus 2.9% per transaction, while x402 transaction fees can run around $0.0001, making true pay-per-use viable down to fractions of a cent. USDC as the settlement currency eliminates volatility risk. The blockchain provides finality: once confirmed, the transaction cannot be reversed or disputed, which matters enormously for micropayment models where chargeback risk would make the economics impossible.
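A quick calculation shows why the fee structure matters at micropayment sizes. The sketch below uses the fee figures quoted above and an assumed one-cent payment; the function names are illustrative.

```python
from decimal import Decimal

CARD_FIXED = Decimal("0.30")     # fixed card fee per transaction, from the text
CARD_PERCENT = Decimal("0.029")  # 2.9% card fee, from the text
X402_FEE = Decimal("0.0001")     # approximate x402 fee, from the text


def card_fee(amount: Decimal) -> Decimal:
    """Card processing fee for a payment of the given size, in dollars."""
    return CARD_FIXED + CARD_PERCENT * amount


def fee_ratio(fee: Decimal, amount: Decimal) -> Decimal:
    """Fee expressed as a multiple of the payment amount."""
    return fee / amount


payment = Decimal("0.01")                      # assumed one-cent API call
card = card_fee(payment)                       # 0.30029 dollars
card_ratio = fee_ratio(card, payment)          # fee is about 30x the payment
x402_ratio = fee_ratio(X402_FEE, payment)      # fee is 1% of the payment
```

On a one-cent payment, the card fee is roughly thirty times the value being transferred, while the x402 fee is about one percent of it. That difference is what makes per-request pricing workable.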

So while x402 doesn't resolve issues related to governments' ability to restrict transactions, it is the most robust agent transaction mechanism to date by a wide margin.

Weekly x402 volume rose from 46,000 to 930,000 transactions in a single month in late 2025, roughly a 20-fold increase. This is not a concept. It's already operating at scale.

Proof of action.

The third proof category is the hardest and the most consequential: a verifiable, tamper-evident record of everything the agent did, why it did it, in what order, and under whose authorization. This is what Article 12 of the EU AI Act demands. This is what "human oversight" means in practice when the agent is running faster than any human can watch.

Hash-chained audit logs address this directly. Each entry in the log includes the SHA-256 hash of the prior entry, so modifying any record breaks verification for that record and every record after it. Merkle tree aggregation allows batch anchoring: the Merkle root of a set of audit records can be published on-chain (for example, on Base L2 via the Ethereum Attestation Service, though other options exist), creating an immutable, timestamped proof of the log's state at that moment.
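The chaining idea fits in a few lines. The following is a minimal sketch, assuming records are JSON-serializable dicts and that an all-zero string stands in for the hash before the first entry; the function and field names are hypothetical and not taken from any particular product.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder for "no previous entry"


def entry_hash(prev_hash: str, record: dict) -> str:
    """SHA-256 over the previous hash plus a deterministic encoding of the record."""
    body = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a record, linking it to the hash of the entry before it."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev_hash": prev, "hash": entry_hash(prev, record)})


def first_invalid_entry(log: list):
    """Return the index of the first entry that fails verification, or None if intact."""
    prev = GENESIS
    for i, entry in enumerate(log):
        if entry["prev_hash"] != prev or entry["hash"] != entry_hash(prev, entry["record"]):
            return i
        prev = entry["hash"]
    return None


audit_log = []
append_entry(audit_log, {"agent": "agent-a", "action": "quote.request"})
append_entry(audit_log, {"agent": "agent-a", "action": "payment.send", "amount": "0.01"})
append_entry(audit_log, {"agent": "agent-a", "action": "task.complete"})
```

If someone later edits the second record, `first_invalid_entry(audit_log)` returns 1. Repairing that entry's hash would in turn break the link stored in the third entry, so a quiet edit is not possible without rewriting the rest of the chain.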

The blockchain anchoring is optional for day-to-day operations but essential for regulatory defense.

It converts an internally-maintained log into an externally-verifiable artifact.
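Batch anchoring depends on reducing many log entries to one short value. The sketch below computes a Merkle root over a batch of entry hashes, under stated assumptions: SHA-256 throughout, distinct prefixes for leaves and interior nodes, and an unpaired node carried up unchanged. Tree conventions differ between systems, so this is one reasonable layout rather than the one any given attestation service uses. Publishing the root on-chain is not shown.

```python
import hashlib


def _sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(leaves: list) -> bytes:
    """Reduce a non-empty list of byte strings to a single 32-byte root."""
    if not leaves:
        raise ValueError("cannot anchor an empty batch")
    # Prefix bytes keep leaf hashes and interior-node hashes from colliding.
    level = [_sha256(b"\x00" + leaf) for leaf in leaves]
    while len(level) > 1:
        paired = [
            _sha256(b"\x01" + level[i] + level[i + 1])
            for i in range(0, len(level) - 1, 2)
        ]
        if len(level) % 2 == 1:
            paired.append(level[-1])  # odd node out is carried up as-is
        level = paired
    return level[0]


batch = [b"entry-hash-1", b"entry-hash-2", b"entry-hash-3"]  # placeholder entry hashes
root = merkle_root(batch)
```

Only `root` needs to be published. Anyone holding the original batch can recompute it later and compare, and a change to any single entry produces a different root.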

What "Unified" Actually Looks Like

The Attestix project (open-source, Apache 2.0, available at github.com/VibeTensor/attestix) is the most complete public implementation of unified agent attestation infrastructure I've seen. It is still very early days for agentic attestation systems. So while this project may not be the final result, it is worth examining because it illustrates what the full stack actually looks like when assembled correctly.

Attestix operates as an MCP server, which means any MCP-compatible agent can invoke its toolset without modifying core agent logic. A few design choices are worth noting.

- The system actively rejects self-assessment for high-risk AI systems — if Article 43 requires third-party conformity assessment, self-certification fails at the infrastructure level.

- The reputation model uses 30-day exponential decay, which means recent behavior counts more than historical behavior and outdated reputation scores don't persist indefinitely.

- The Ed25519 sign/verify cycle benchmarks at 0.28ms — fast enough to sign every agent action in real time without becoming a performance bottleneck.

- The hash-chained log structure means any modification to any record fails verification — the kind of tamper evidence Article 12 requires isn't aspirational, it's cryptographically enforced.
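To make the reputation bullet concrete, here is a minimal sketch of exponentially decayed scoring. It assumes "30-day" means a 30-day half-life and that each event is scored between 0 and 1; neither detail is confirmed by the project, and the function names are hypothetical rather than Attestix's API.

```python
HALF_LIFE_DAYS = 30.0  # assumption: "30-day" is read as a half-life


def decay_weight(age_days: float) -> float:
    """Weight of an event: 1.0 today, 0.5 after one half-life, 0.25 after two."""
    return 0.5 ** (age_days / HALF_LIFE_DAYS)


def reputation(events: list):
    """Decay-weighted average of (age_days, outcome) pairs; None if there are no events."""
    total_weight = sum(decay_weight(age) for age, _ in events)
    if total_weight == 0:
        return None
    weighted = sum(decay_weight(age) * outcome for age, outcome in events)
    return weighted / total_weight


# One success today and one failure 30 days ago.
recent_good = reputation([(0, 1.0), (30, 0.0)])
# The same two outcomes in the opposite order.
recent_bad = reputation([(0, 0.0), (30, 1.0)])
```

With these inputs the recent success scores about 0.67 and the recent failure about 0.33, even though both histories contain one good and one bad outcome. Recency decides the score, which is the behavior the bullet describes.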

Building on MCP matters because MCP has rapidly become the de facto standard for agent tool access. Google's A2A protocol addresses agent-to-agent messaging. Neither protocol addresses identity, credential exchange, or compliance.

Attestix may be one of the first, but it won't be the last, and it probably won't be the final agreed implementation. However, this is the pattern that will likely emerge across the infrastructure stack: core agent communication protocols handle routing and tool invocation; attestation infrastructure handles trust, compliance, and verifiability as a composable layer underneath.

The OpenClaw Context

I've been running OpenClaw since its early open-source releases — one of the first hands-on operators of the platform. The identity and attestation problem shows up immediately in any serious multi-agent deployment. When you're orchestrating agents across tasks, you need to know: which agent took which action, under what authorization, at what time, and can that record be reconstructed and verified after the fact?

Right now, the answer in most deployments is "approximately." Approximately, because the logs exist but aren't cryptographically chained. Approximately, because authorization is tracked by platform convention, not by verifiable credential. Approximately, because payments are handled by wrapper accounts that obscure the actual payment trail. Approximately is fine for demos. It fails for production deployments in regulated environments.

The attestation layer — DID-based identity, UCAN delegation chains, x402 for payments, hash-chained audit logs anchored on-chain — converts "approximately" into "provably." That's the transition the industry needs to make, and the regulatory timelines make it non-optional.

Why Now Is the Right Time

The problem has existed since the first autonomous agent shipped.

What has changed, and why does it matter now?

Three factors have converged to make the solution both necessary and viable at the same time.

- The first is regulatory compression. The EU AI Act, Singapore's MGF, Colorado's SB 24-205, and NIST AI RMF alignment expectations across jurisdictions create overlapping compliance obligations that all resolve to the same underlying need: verifiable identity, verifiable authorization, verifiable audit trail. These regulations don't prescribe a specific technical implementation. They describe outcomes ("the system can be understood," "records can be verified," "human oversight is possible"), and the attestation stack described here is how those outcomes get built.

- The second is infrastructure maturity. Ed25519 is fast and standardized. W3C DIDs and Verifiable Credentials are finalized specifications with active tooling. UCAN is in production. x402 is live with measurable transaction volumes. The Ethereum Attestation Service on Base L2 provides on-chain anchoring at near-zero cost. Every primitive needed to build the full attestation stack exists today, is open-source, and is production-tested.

- The third is the agentic commerce inflection point. The reason to solve this now, before regulatory deadlines force it, is that agentic commerce is about to become large enough that the current trust deficit becomes an economic problem, not just a compliance problem. An agent economy where counterparties can't verify agent identity or authorization can't scale beyond early-adopter communities willing to accept platform-level trust. An agent economy where every transaction is cryptographically provable scales to the same size as the internet.

The companies building agentic deployments today have a narrow window to build the proof layer in as infrastructure rather than bolting it on as compliance overhead later. The stack exists. The standards are finalized. The regulatory timelines are set.

Build the proof layer now, or spend 2027 rebuilding what you shipped in 2025.